Autonomous Agents - A Multipart Series about Manus, Anthropic MCP and other related technologies is sponsored by Agent.ai - Discover, connect with and hire AI agents to do useful things.

I will start by saying that I am not a technical expert on this topic, and this is not a technical teardown (that is not my forte). My expertise is in using technologies to solve real business problems and then sharing my experience. I hope this saves others the time and effort that goes into experimentation, and helps people understand where the technology is today, which use cases it works for, and which it does not.

I started experimenting to get a deeper understanding of how autonomous agents work, and Anthropic's Model Context Protocol (MCP) is the closest thing I have found to something I can build an autonomous agent with (without being a programmer).

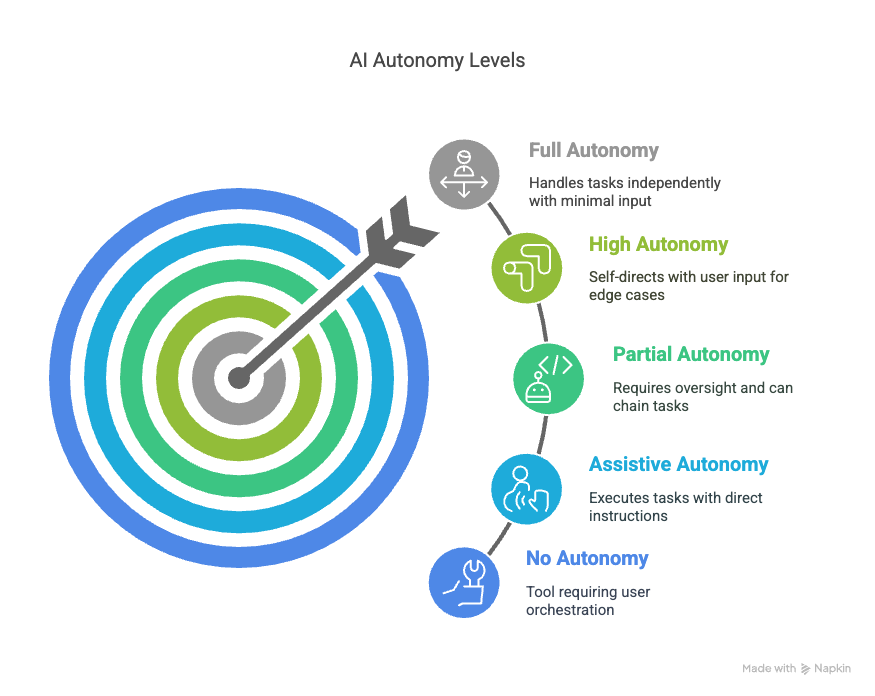

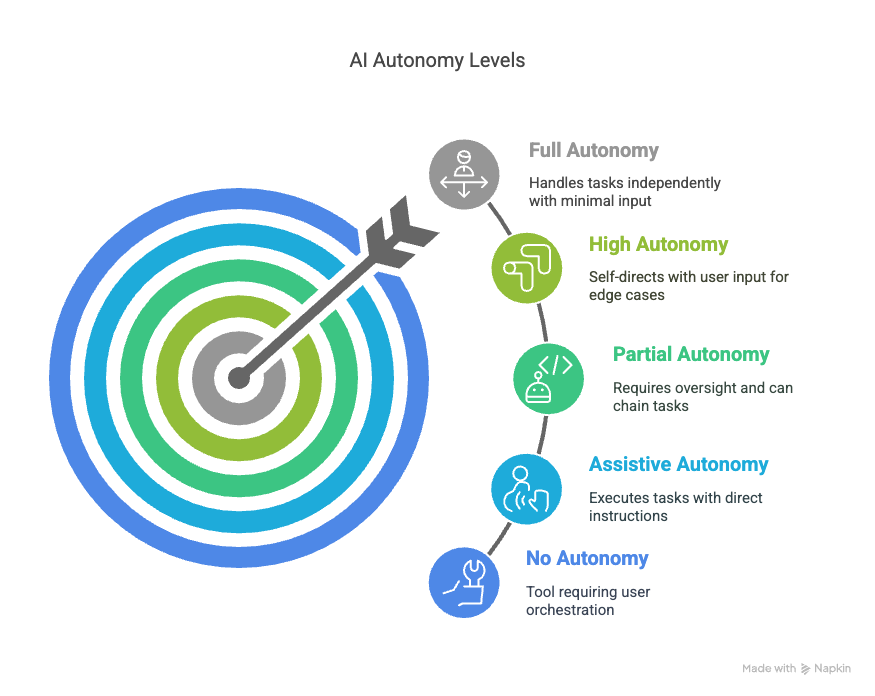

An artificial intelligence (AI) agent is a software program that can interact with its environment, collect data, and use the data to perform self-determined tasks to meet predetermined goals. Humans set goals, but an AI agent independently chooses the best actions it needs to perform to achieve those goals - https://aws.amazon.com/what-is/ai-agents/

While the traditional definition of an agent assumes autonomy, I think there is value in both human-defined agents and autonomous agents (my clarification on the definition).

So, let's start with the autonomous agent use case where I use Anthropic MCP with Agent.ai tools - Meeting Preparation. We have all been in this situation: you have a meeting with an executive, you have not had time to prepare, and you want that 1-pager. You want to get the following information -

- LinkedIn research about the executive (profile and posts)
- Research about the executive's company (financial information and other details)
- Latest news about the executive
- Summary of any YouTube videos they appear in

AND I want it in a nice document.

If it were completely autonomous, I would just say: "I am meeting with Executive X at Company Y. Please do some detailed research about this executive and generate a write-up that will help me prepare for an intro meeting."

Having done this many times, what I have noticed is that Claude with MCP tends to go through a trial-and-error process, and this is one of the cons of a completely autonomous agent. It takes your goal, decides which steps it wants to take, and tries them out. If a step does not work, it tries a different way.

This works great the first few times, when you are experimenting. At some point, though, you know what the steps are and you want it to execute them the same way every time. You don't have this guarantee with an autonomous agent, as you don't control the execution flow.

To get around this, I have started giving it very specific instructions on which tools it should use. I don't give it specifics on what to do. So here is what that looks like -

I have a meeting with "EXECUTIVE NAME", "TITLE" at "COMPANY". I would like you to use get_linkedin_profile, find_linkedin_profile, get_linkedin_activity, get_search_results to find any youtube videos he has been in, get_youtube_transcript for those videos, get_google_news to find latest news about him and then take all this information and use invoke_llm to generate a summary about EXECUTIVE NAME and COMPANY that will help me prepare for this meeting.

Depending on the company, I will sometimes ask it to use the get_company_object, company_financial_info and company_financial_profile tools to get more detailed company information.
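For anyone who runs this prompt often, here is a minimal Python sketch that fills in the blanks. The tool names come from the prompt above; the function name `build_meeting_prep_prompt`, the `include_company_tools` flag, and the sample values are my own assumptions for illustration, and the wording is lightly adapted (for example "they" instead of "he"). It only builds the text you would paste into Claude; it does not call Agent.ai or Claude.

```python
# Optional company tools named in the article.
COMPANY_TOOLS = (
    "get_company_object",
    "company_financial_info",
    "company_financial_profile",
)


def build_meeting_prep_prompt(
    name: str,
    title: str,
    company: str,
    include_company_tools: bool = False,
) -> str:
    """Return the meeting-prep prompt text (hypothetical helper, not an Agent.ai API)."""
    prompt = (
        f'I have a meeting with "{name}", "{title}" at "{company}". '
        "I would like you to use get_linkedin_profile, find_linkedin_profile, "
        "get_linkedin_activity, get_search_results to find any YouTube videos "
        "they have been in, get_youtube_transcript for those videos, "
        "get_google_news to find the latest news about them, and then take all "
        "this information and use invoke_llm to generate a summary about "
        f"{name} and {company} that will help me prepare for this meeting."
    )
    if include_company_tools:
        # Only added when more detailed company information is wanted.
        prompt += (
            " Also use " + ", ".join(COMPANY_TOOLS)
            + " to get more detailed company information."
        )
    return prompt


if __name__ == "__main__":
    # Sample values are placeholders, not real people or companies.
    print(build_meeting_prep_prompt("Jane Doe", "CFO", "Example Corp", True))
```

Keeping the tool list inside the template is the point: the prompt tells Claude which tools to use, while still leaving it free to decide how to use them.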

Then I just run this, and after a few minutes I have a well-crafted output that does an amazing job of getting me prepared for my meeting. I did not have to build an agent in Agent.ai or learn how to use Agent.ai.

If anyone is interested in what this output looks like, here is one I did for an old high school friend of mine that I was meeting to talk about GTM - https://claude.site/artifacts/8f315ddc-5458-4321-80fa-4b334fba3cd9.

Here is a video where I show this process in action - https://youtu.be/4-80lrptO6g

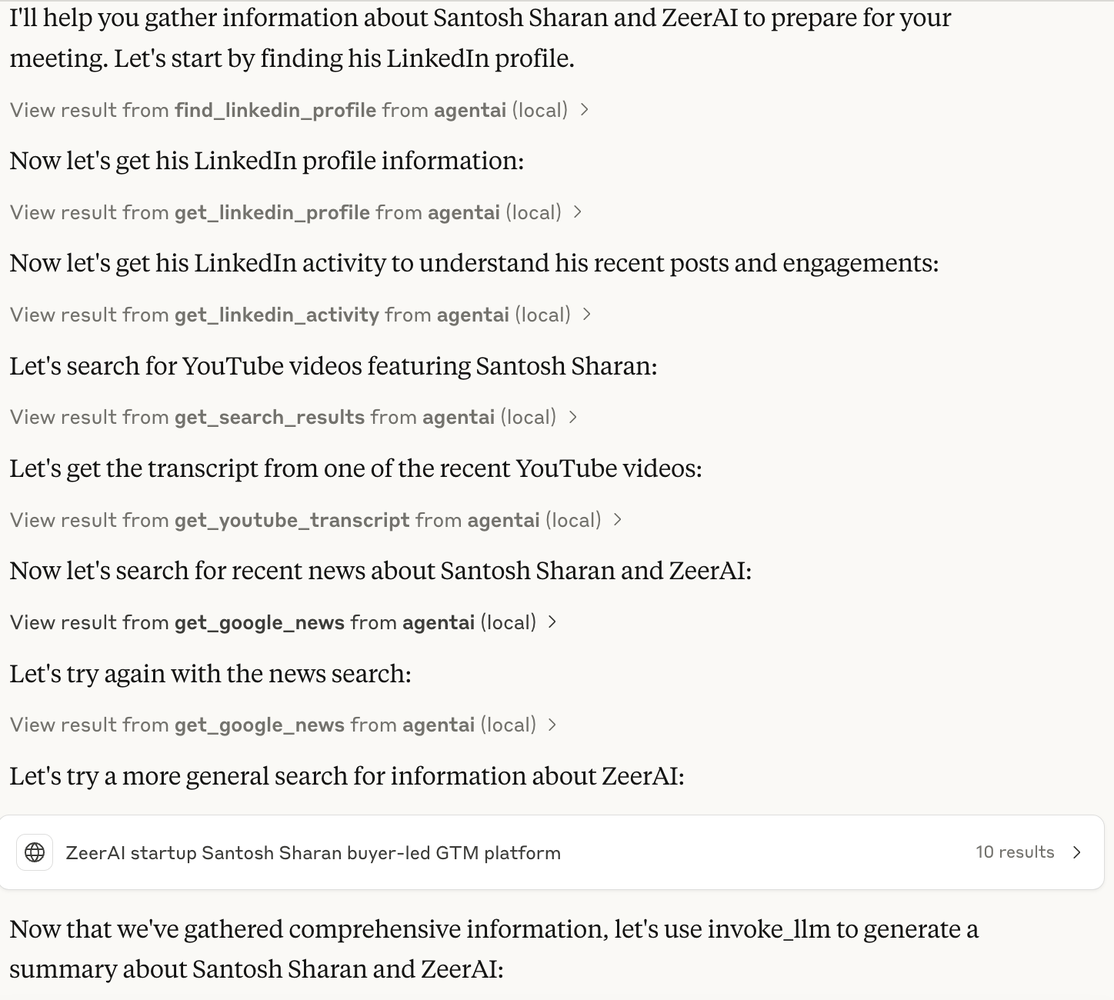

Here is a screenshot of the steps that Claude went through to achieve the goal it was given -

In case you are wondering what the downsides are (FOR NOW), here are some that I have run into. I would love it if people could share any workarounds they have found -

When I am building an agent, the process is iterative, and over time Claude starts telling me that the conversation is getting too long. Eventually it stops working. At that point, I have to start a new conversation and pass all the context to it.

As conversations get long, you start running into usage limits with Claude 3.7 Sonnet, and when this happens you cannot just switch to a lower-tier model, as that resets the session. So we are back to starting a new conversation. I know Anthropic just came out with a Max plan, which can cost $100-$200 per month, as a way to address this.

You have to give it permission to execute the tools. So in the previous example, we are using 6 different Agent.ai tools and I am asked for permission 6 times during the execution. Once I give it permission, it can run that tool for the rest of that session without asking again, but this does cause a little friction.

There is a lot being written about security and how this opens up new "attack vectors". Basically, malicious actors could create MCP servers whose tools, if you add them to Claude, could cause issues. MCP servers that connect to an underlying application ask for a token to do so (e.g. a HubSpot token, a Slack token etc.). Currently these tokens are stored in a config file.
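To make the config file point concrete, here is a small Python sketch, under some assumptions. The `mcpServers` / `command` / `args` / `env` layout follows the format Claude Desktop uses for its MCP config file. The server name `example-crm`, the package name, the environment variable names, and the helper `find_plaintext_secrets` are all hypothetical. The helper simply lists `env` entries whose names look like credentials, so you can see which secrets are sitting in the file.

```python
import json
from typing import Any, Dict, List

# Substrings that suggest an env entry holds a credential (a rough heuristic).
SECRET_HINTS = ("TOKEN", "KEY", "SECRET", "PASSWORD")

# Hypothetical config; only the overall layout mirrors Claude Desktop's file.
EXAMPLE_CONFIG: Dict[str, Any] = json.loads("""
{
  "mcpServers": {
    "example-crm": {
      "command": "npx",
      "args": ["-y", "example-crm-mcp-server"],
      "env": {"EXAMPLE_CRM_TOKEN": "replace-me", "LOG_LEVEL": "info"}
    }
  }
}
""")


def find_plaintext_secrets(config: Dict[str, Any]) -> List[str]:
    """Return 'server.ENV_NAME' for each non-empty env entry that looks like a secret."""
    findings = []
    for server_name, server in config.get("mcpServers", {}).items():
        for env_name, value in server.get("env", {}).items():
            if value and any(hint in env_name.upper() for hint in SECRET_HINTS):
                findings.append(f"{server_name}.{env_name}")
    return sorted(findings)


if __name__ == "__main__":
    for finding in find_plaintext_secrets(EXAMPLE_CONFIG):
        print("Token stored in config:", finding)
```

This does not make anything more secure on its own. It is just a quick way to see how many tokens a growing list of servers leaves in one file.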

I have found Claude Desktop with MCP great for brainstorming a new agent and doing the trial and error, but I really think there needs to be a separation between design time and run time. Currently, once I have an autonomous agent working, if I want to run that agent multiple times, there is no easy way to get it to execute the same steps every time. What would be great is the ability to publish an agent and then just execute it again and again.
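Here is a minimal sketch of what that "publish once, run many times" idea could look like, assuming the tool order discovered at design time is saved as a plain list. This is not a feature of Claude Desktop or Agent.ai. `run_plan`, `make_stub_tool`, and the shared `context` dictionary are hypothetical names, and the stub tools only return canned strings in place of real tool calls.

```python
from typing import Any, Callable, Dict, List, Tuple

# Assumed shape: a tool reads the shared context and returns its output.
ToolFn = Callable[[Dict[str, Any]], Any]

# Tool order recorded at design time (taken from the meeting-prep prompt).
MEETING_PREP_PLAN = [
    "get_linkedin_profile",
    "find_linkedin_profile",
    "get_linkedin_activity",
    "get_search_results",
    "get_youtube_transcript",
    "get_google_news",
    "invoke_llm",
]


def run_plan(
    plan: List[str], tools: Dict[str, ToolFn], context: Dict[str, Any]
) -> List[Tuple[str, Any]]:
    """Run tools in exactly the recorded order; fail up front rather than improvise."""
    missing = [name for name in plan if name not in tools]
    if missing:
        raise KeyError(f"plan references unknown tools: {missing}")
    results = []
    for name in plan:
        output = tools[name](context)
        context[name] = output  # later steps can read earlier outputs
        results.append((name, output))
    return results


def make_stub_tool(name: str) -> ToolFn:
    """Stand-in for a real tool call; returns a canned string."""
    def tool(context: Dict[str, Any]) -> str:
        return f"{name} result for {context['executive']}"
    return tool


if __name__ == "__main__":
    stub_tools = {name: make_stub_tool(name) for name in MEETING_PREP_PLAN}
    for step, output in run_plan(MEETING_PREP_PLAN, stub_tools, {"executive": "Jane Doe"}):
        print(step, "->", output)
```

The trade-off is the one described above: a fixed plan gives you the same steps every run, but it gives up the agent's ability to try a different route when a step fails.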

There are product-specific MCP servers (Salesforce, HubSpot, Slack, Google Drive etc.) that make a lot of sense, but I do see some of these being built by 3rd parties and not necessarily the vendors. What does this mean? Do I trust them? What happens as we see a proliferation of MCP servers? How are they governed, discovered, secured? How will this affect performance? If my Claude Desktop is given access to thousands of tools across many different products, what happens? What if there are conflicting tools?
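One narrow version of the "conflicting tools" question can be checked mechanically: do two servers expose a tool with the same name? Below is a minimal Python sketch, assuming you already have a list of tool names per server. The server and tool names are made up, and `find_conflicting_tools` is a hypothetical helper rather than part of MCP or Claude Desktop.

```python
from collections import defaultdict
from typing import Dict, List, Set


def find_conflicting_tools(servers: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Map each tool name offered by more than one server to those servers."""
    owners: Dict[str, Set[str]] = defaultdict(set)
    for server_name, tool_names in servers.items():
        for tool_name in tool_names:
            owners[tool_name].add(server_name)
    # Keep only names that appear on two or more distinct servers.
    return {
        tool: sorted(names) for tool, names in owners.items() if len(names) > 1
    }


if __name__ == "__main__":
    # Made-up inventory: a vendor-built server, a 3rd-party one, and a chat server.
    inventory = {
        "vendor-crm": ["search", "get_contact"],
        "third-party-crm": ["search", "update_contact"],
        "chat": ["send_message"],
    }
    print(find_conflicting_tools(inventory))
```

A name clash is only the simplest kind of conflict. Two differently named tools that do overlapping things would not be caught by a check like this.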

Finding and deploying servers is another hurdle. Dharmesh Shah has written in his post about how this requires working with GitHub repos and self-hosting the servers.

So where does this leave us? I think as a v1 of what autonomous agents will look like, it is pretty amazing. I do not build an agent any more without first vibing with Claude + MCP. I have it generate all the steps, then test each step, and only then do I finally build it in Agent.ai. I also don't debug any agent without using Claude + MCP. So I see tremendous value in it helping me build and test agents. I also see tremendous value in just using Claude Desktop, and not building an agent at all, for certain use cases like the Meeting Prep agent, where the steps are pretty obvious and there isn't a lot happening.

My parting words: get hands-on with Claude Desktop and MCP. If you are in the business of building agents, this is something you should absolutely try out.