Anthropic Agentic Systems: A Five-Part Exploration is sponsored by Agent.ai - Discover, connect with and hire AI agents to do useful things.

I posted the other day about hiring for programming jobs being down. There have been a lot of articles written about this.

In other words, less-experienced, entry-level coders have fewer job opportunities in an AI-infused technology environment. Overall, open software engineering jobs have declined from over 100,000 in 2022 to about 60,000 today, with front-end jobs seeing little to no growth in the past five years.

That being said, agents are emerging as the new software layer, and there are a growing number of Agent Builder jobs. People can use low-code products like Agent.ai to learn and build agents. As someone who has monetized agent building, I can vouch for this from personal experience.

To help demystify agent building, this is the 4th edition of the newsletter covering the five patterns for Agentic Systems that Anthropic is seeing in production.

Orchestrator-Workers

Evaluator-Optimizer

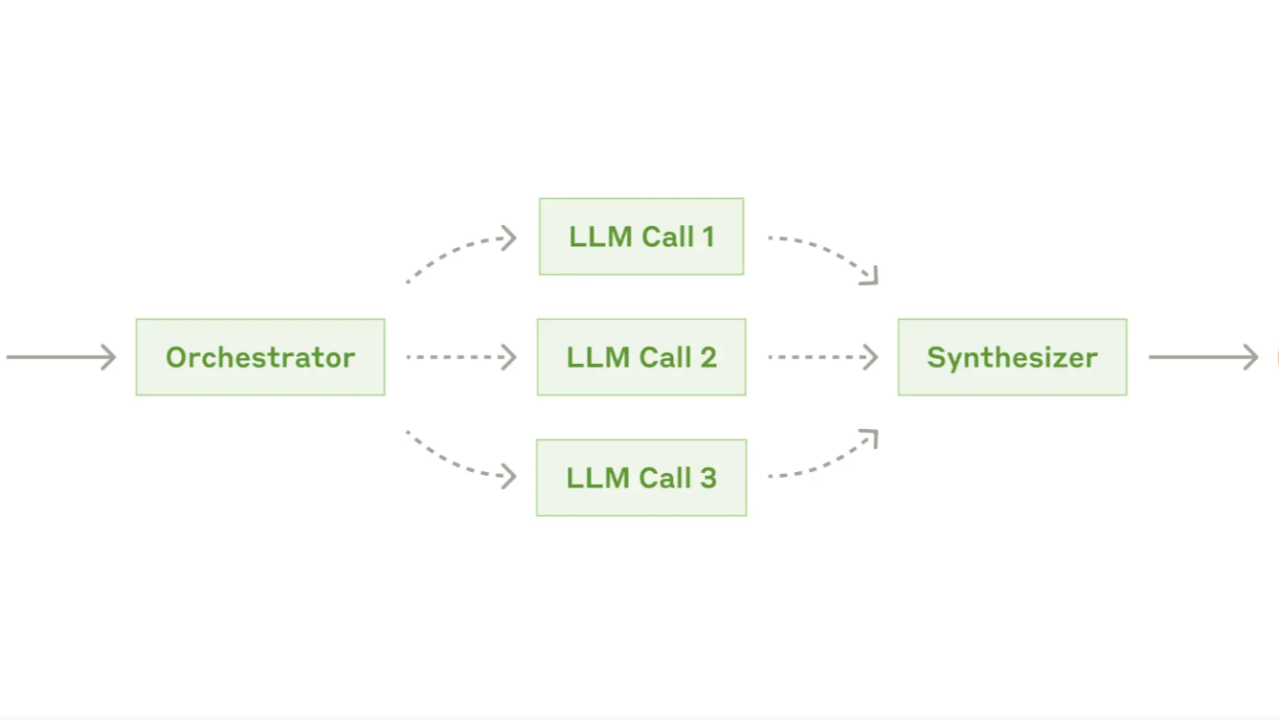

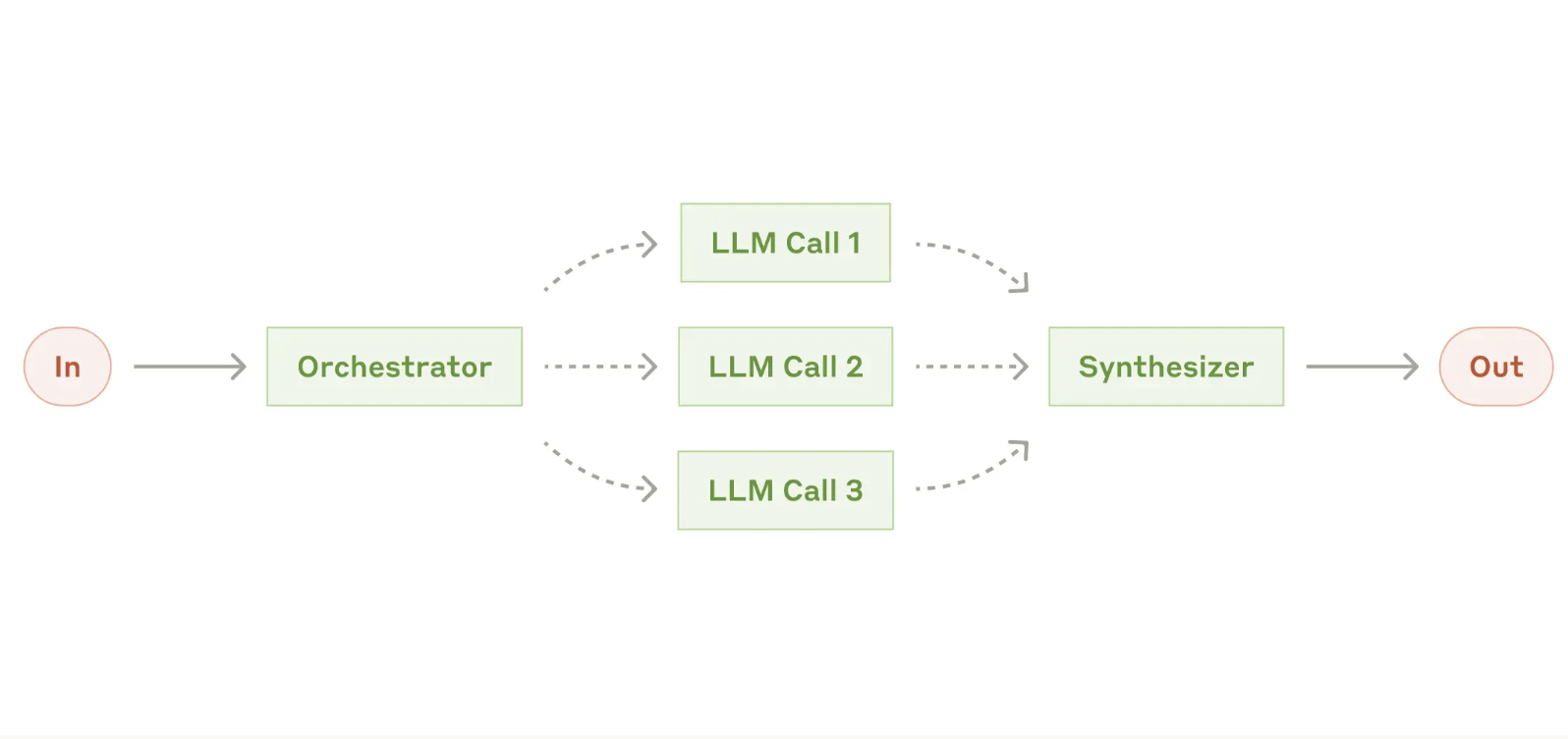

Anthropic does a great job explaining what the Orchestrator-Workers pattern is. This is the first pattern where we start moving away from a traditional hard-coded workflow to something that can only be done with an LLM that can reason and plan. While not completely autonomous, it starts bringing in some autonomy: the agent begins making decisions about how to route work and whom to route it to.

In the orchestrator-workers workflow, a central LLM dynamically breaks down tasks, delegates them to worker LLMs, and synthesizes their results. This workflow is well-suited for complex tasks where you can’t predict the subtasks needed.

The last part of the paragraph above, "where you can't predict the subtasks needed", is the key to this pattern. If you can't predict the subtasks, you as the builder cannot decide in advance what should happen, so the LLM has to decide at run time.

Here is a visual representation of the pattern.

The first thing that stands out is that this looks deceptively similar to the "Parallelization" pattern.

Whereas it’s topographically similar, the key difference from parallelization is its flexibility—subtasks aren't pre-defined, but determined by the orchestrator based on the specific input.
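To make that difference concrete, here is a minimal Python sketch. It is only an illustration: `stub_llm` is a made-up stand-in for a real model call, its canned replies are invented, and none of these function names come from Anthropic or Agent.ai.

```python
from typing import Callable, List

# Parallelization: the builder hard-codes the subtasks ahead of time.
FIXED_SUBTASKS = [
    "Generate a superhero costume",
    "Generate an archvillain",
    "Generate a weakness for the superhero",
]


def parallelization_subtasks(user_input: str) -> List[str]:
    """The subtask list is the same no matter what the input is."""
    return list(FIXED_SUBTASKS)


def orchestrator_subtasks(user_input: str, llm: Callable[[str], str]) -> List[str]:
    """The orchestrator LLM decides the subtasks after reading the input."""
    reply = llm(
        "List the subtasks needed for this input, one per line:\n" + user_input
    )
    # Keep every non-empty line of the reply as one subtask.
    return [line.strip() for line in reply.splitlines() if line.strip()]


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call: returns a canned plan."""
    if "pilot" in prompt:
        return "Design a flight suit\nInvent an aerial rival"
    return "Write an origin story"


if __name__ == "__main__":
    for profile in ("a pilot", "a chef"):
        print(profile, "->", orchestrator_subtasks(profile, stub_llm))
```

With parallelization, both profiles get the same three subtasks. With the orchestrator, the number and content of the subtasks change with the input, which is why the builder cannot wire them up in advance.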

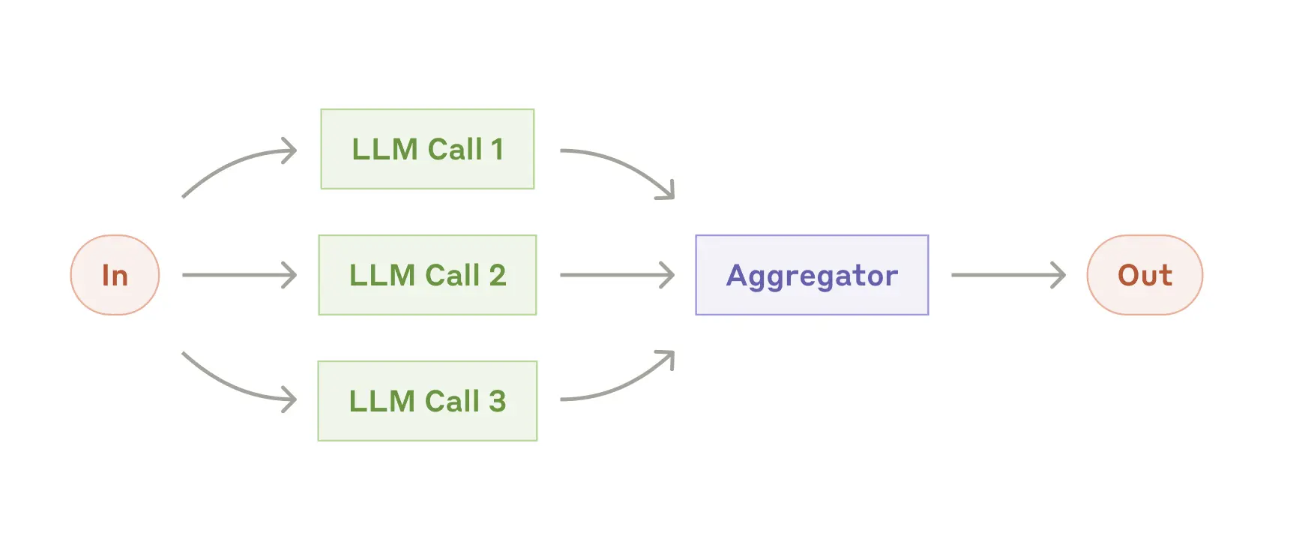

Now that we have the theory out of the way, how can we apply this pattern to our Superhero story? In my previous example, I took the LinkedIn profile of a person and then executed three LLM actions:

Task 1 : Generates a Superhero Costume

Task 2 : Generates an Archvillain

Task 3 : Generates a weakness for the Superhero

Then we aggregated all this into one output.

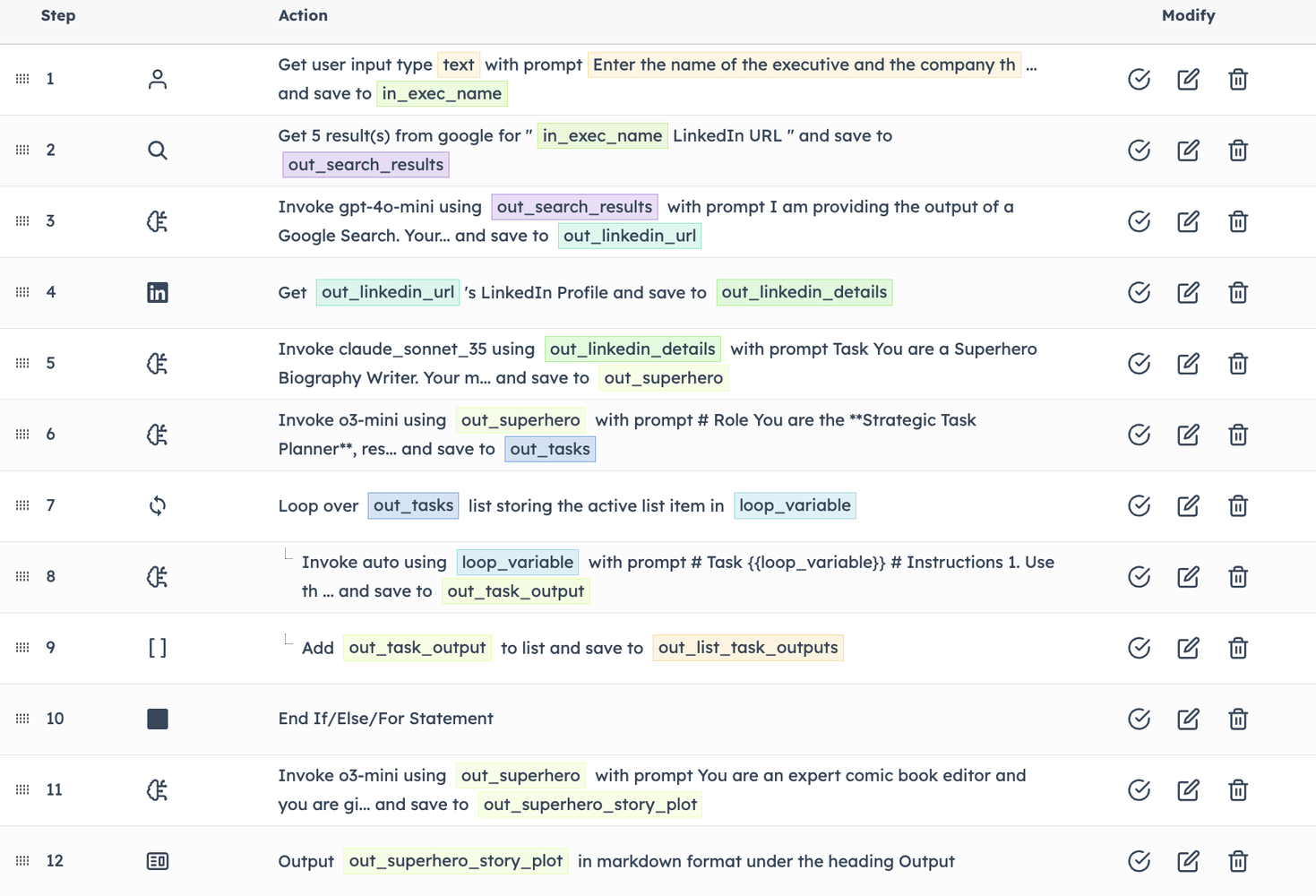

For this example, we will tell the LLM that it is responsible for generating a Superhero story. It needs to break the task down into subtasks and generate a prompt for each one. Those prompts are then sent to LLMs to execute the tasks, and a synthesizer task puts the story back together.

This gets into a pattern called Meta-Prompting: we don't define the worker prompts ourselves. Instead, we write one prompt that, given some inputs, comes up with the tasks and their prompts.
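A rough sketch of what meta-prompting can look like in Python follows. The template wording, the JSON reply format, and the names `build_meta_prompt` and `parse_plan` are all assumptions made for illustration; this is not the actual orchestrator prompt used in the agent.

```python
import json
from typing import Dict, List

# Hypothetical meta-prompt: it asks the model to invent the tasks AND
# the prompt each worker should receive.
META_PROMPT_TEMPLATE = """You are the orchestrator for a superhero story.
Objective: {objective}
Superhero bio: {bio}

Break the objective into subtasks. For each subtask, write the prompt
a worker LLM should receive. Reply with a JSON list of objects, each
with the keys "task" and "prompt"."""


def build_meta_prompt(objective: str, bio: str) -> str:
    """Fill the template with this run's inputs."""
    return META_PROMPT_TEMPLATE.format(objective=objective, bio=bio)


def parse_plan(reply: str) -> List[Dict[str, str]]:
    """Turn the orchestrator's JSON reply into a list of task dicts."""
    plan = json.loads(reply)
    if not isinstance(plan, list):
        raise ValueError("expected a JSON list of tasks")
    for item in plan:
        if not isinstance(item, dict) or not {"task", "prompt"} <= set(item):
            raise ValueError(f"entry is missing 'task' or 'prompt': {item!r}")
    return plan


if __name__ == "__main__":
    print(build_meta_prompt("Write a superhero story", "Captain Spreadsheet"))
    # A made-up reply, standing in for what a model might send back.
    canned = '[{"task": "Villain", "prompt": "Create a villain for this hero."}]'
    print(parse_plan(canned))
```

Asking for a structured reply (JSON here) is a design choice that makes the later steps easier, because the list of tasks can be looped over without any guessing about where one task ends and the next begins.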

The prompt we are going to give the Orchestrator is to take a Superhero Bio generated from a LinkedIn profile and use that to flesh out other elements that are key to a superhero story.

Here is a visual of the agent and link for those that want to give it a spin.

Here is the prompt for the Orchestrator

You will notice that nowhere do we specify what tasks it should perform. We give it an objective and instructions but the orchestrator is responsible for coming up with the tasks.

Here is the list of tasks it came up with

You will notice that it came up with someone to write a background story, someone to design gear, a villain, a supporting cast, and a scene and setting. I did not tell it to do any of this. These are then generated as prompts that are executed later.

For those of us using reasoning models like DeepSeek R1, o1 pro, o3-mini, or Deep Research, this is the kind of thing the LLM is doing under the covers. It takes a task, breaks it down dynamically into subtasks based on the goals, objectives and context, and then collates and returns the response.

We then use a For Loop to run the tasks one by one. Since we don't know how many tasks there will be and cannot hardcode them, we need a flexible way to create a list of tasks and then run through it dynamically.
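In plain Python, the loop and the synthesizer step could be sketched roughly like this. The plan format (a list of dicts with `task` and `prompt` keys), the function names, and `echo_llm` are assumptions for illustration; `echo_llm` only echoes part of its prompt back, so the sketch runs without calling any real model.

```python
from typing import Callable, Dict, List


def run_workers(
    plan: List[Dict[str, str]], llm: Callable[[str], str]
) -> List[Dict[str, str]]:
    """Run each orchestrator-generated prompt in order, one by one."""
    results = []
    for step in plan:  # the length of the plan is only known at run time
        output = llm(step["prompt"])
        results.append({"task": step["task"], "output": output})
    return results


def synthesize(results: List[Dict[str, str]], llm: Callable[[str], str]) -> str:
    """Hand every worker output to one final call that assembles the story."""
    sections = "\n\n".join(
        f"Task: {r['task']}\nOutput: {r['output']}" for r in results
    )
    return llm("Combine these sections into one superhero story:\n\n" + sections)


def echo_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call: echoes the start of the prompt."""
    return f"[model reply to: {prompt[:40]}]"


if __name__ == "__main__":
    # A made-up plan; in the real flow this comes from the orchestrator.
    plan = [
        {"task": "Gear", "prompt": "Design gear"},
        {"task": "Villain", "prompt": "Create a villain"},
    ]
    results = run_workers(plan, echo_llm)
    print(synthesize(results, echo_llm))
```

The loop body never mentions a specific task, so the same code works whether the orchestrator comes back with two tasks or ten.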

This is the final output -

I hope this example gives you an idea of the power of building agents and agentic systems, and that it inspires you to sign up for Agent.ai and build some agents.